When an essay creates a necessary firestorm

Commentary on the Citrini essay

You may have read the recent essay by Citrini Research, “THE 2028 GLOBAL INTELLIGENCE CRISIS: A Thought Exercise in Financial History, from the Future.” If not, you should. Just don’t read it before bed.

The piece set off a storm of online commentary, materially affected the stock market, and stirred up heated passion in the heads and mouths of normally staid economists, opinion writers, and podcasters with millions of followers. I think it’s all good, both the essay and the reactions it evoked. It’s the most visible trigger for the conversation we need to have as a society right now.

The essay is a futuristic take on what happens when AI’s capabilities and enterprise adoption continue to accelerate on the trajectory they’re on now. Which is to say, up and to the right.

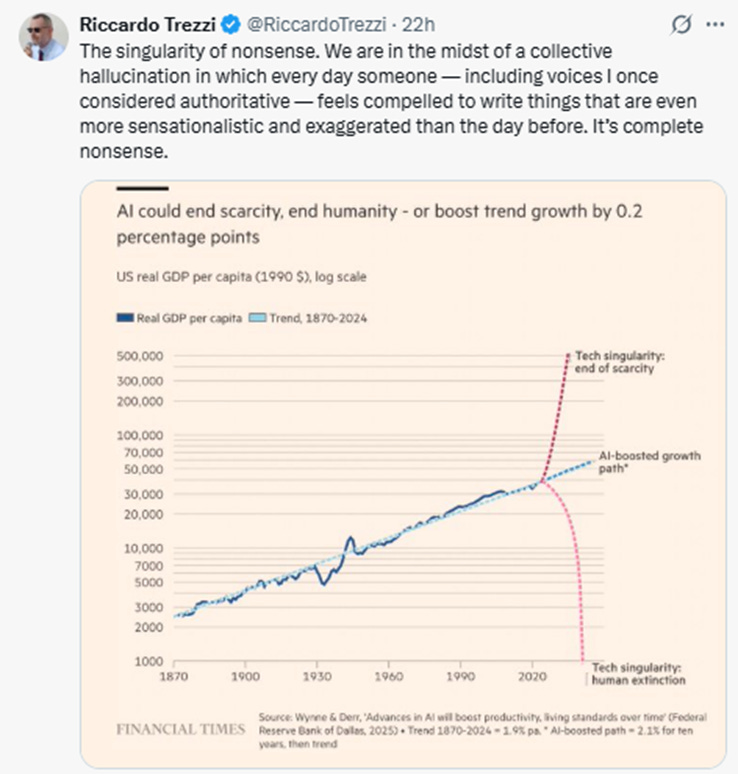

I’m a believer in the blue lines in the chart.

If you’re a fan of AI as a technology, there is no better time to be alive. It can feel fun and exciting, as if you’re on the leading edge of innovation. Like mainlining adrenaline. If you’re a regular human with other interests and, more relevantly, an employee whose job doesn’t involve working directly with AI, you need to buckle up. The essay argues that the trajectory of AI will naturally trigger both more AI-driven improvements and more human unemployment or, at best, underemployment. And this will ripple through the entire economy, because people will collectively make billions of decisions to forgo purchases they would normally make during times of stable employment.

It’s no surprise that many prominent people jumped in to chastise the authors for publishing “science fiction” meant to scare people for no realistic reason. Some countered that AI will not have the negative impact on employment that the Citrini authors predict, because so much of the work humans do cannot easily be done by AI. The usual examples (data scattered across company systems, the difficulty of data integration and normalization, unique talent that fits specific roles in companies) are all, they say, obstacles to swift AI agent adoption.

All of that is more or less true, but can anyone doubt the trajectory of AI at this point? It’s unstoppable, and there is no reason to believe that the organizational constructs and data issues that have persisted in companies for decades will hobble it.

Further to that, Ezra Klein of the New York Times had Jack Clark, co-founder of Anthropic, on an episode of his podcast yesterday, and it was an illuminating discussion about great and terrible things. (Full disclosure: I am a paid subscriber to Claude and a big fan of the tech, though I did not use it to write this article.) The episode is worth listening to for many reasons, but especially for how Clark describes the way companies can adopt AI and AI agents while preserving the dignity of work for humans and ensuring they play a role in the future of business transformation built on a foundation of AI. That middle path (AI as amplifier rather than replacement, with deliberate design choices around apprenticeships, governance, and quality assurance, made by leaders who actually care about outcomes) is the most important idea in the entire episode, IMO, and it gets the least airtime.

All the daily news about AI adoption and its human impact is heady stuff. One camp argues that there should be no guardrails and that AI should be allowed to grow exponentially with few controls (see: the Musk vision of utopia where goods cost nothing and everyone luxuriates, a vision that never quite explains what people do for purpose, or income, when the machines do everything). The other camp argues that the impact on humans must be the top consideration and that AI’s growth should be arrested.

My argument, as I said, is that AI is here, it holds promise to be massively beneficial to humanity, and its growth will continue. But I also think there will be significant, broad disruption between now and that beneficial future. The Citrini essay is a huge public service because it seems to have struck a nerve at the highest levels. It has kicked off a necessary conversation that moves the fear onto a different plane: beyond the usual existential one about AI taking down electricity grids or launching nukes, and toward AI’s broader societal implications, which reach well past job numbers. And it helps us all move on from this weird worship of tech bros toward, perhaps, working on solutions that might help everyone (not just the top 1%) transition to the new economies.

For those of us who have spent careers in Customer Success, this conversation is not abstract. CS was built as a human-intensive patch for products that didn’t fully deliver on their promise and for sales motions that overstated what those products could do. AI doesn’t just automate those functions; it attacks the root causes that made them necessary. The senior leaders reading the Citrini essay and nodding along should be asking themselves a harder question than “How do I protect my team?” They should be asking: “If AI eliminates the friction my department was built to manage, what does genuine customer value look like in its absence, and who in my organization is designing for that?”